Best Practices

- Database configuration

- Sensitive data discovery

- Encrypted connections

- Network and I/O

- Health monitoring

- Data protection

- Host security

- Automation

Database configuration

The performance of DataMasque masking is largely dependent on the performance of the target database. A poorly tuned target database will have a direct negative impact on the execution time of your masking runs. To achieve optimal masking performance, it is important to tune the target database and tables - as you would for application transaction workloads.

The following sections include recommendations for improving the performance of your target databases during masking.

Transaction logging

Data masking is a destructive operation and is performed on copies of production data. Consequently, database recovery is not a critical operational requirement on masking targets. It is therefore recommended to disable transaction logging at the start of data masking runs to improve SQL execution by minimising database I/O.

- For Oracle databases, enable NOARCHIVELOG mode.

- For Microsoft SQL Server databases, enable SIMPLE recovery mode.

Warning: After masking is complete, any transaction logs on the target database should be manually purged. Lingering transaction logs may contain unmasked data in plain text.

Tip: Use a run_sql task before your mask_table tasks to manage the logging configuration of your target database.

Query optimisation

For Oracle databases, use ROWID as the key for mask_table tasks.

ROWID values are the fastest way to look up rows. However, you should not delete or reinsert rows in the same table during masking runs when using ROWID as the key, as this may cause Oracle to reassign ROWID values.

Sensitive data discovery

To ensure complete, ongoing protection of sensitive data in your databases, it is recommended to make use of the Sensitive Data Discovery features provided by DataMasque. Include a run_data_discovery task in every masking ruleset, and ensure that appropriate users have opted in to be notified when new sensitive data is discovered by DataMasque.

Encrypted connections

It is recommended to use encrypted database connections with DataMasque whenever possible. For more information on configuring encryption for your database connections, see the Connections reference guide.

Network and I/O

DataMasque uses remote database connections to support databases running in on-prem and public cloud environments. This means that masking requires data to be transmitted across networks between the database server and DataMasque.

Slow networks with numerous hops between the database server and the DataMasque server can degrade performance. For best performance it is recommended to co-locate your DataMasque instance on the same network as your target databases.

Ways to improve network throughput include:

- Use a minimum 1 Gigabit Ethernet (1GbE) connection between DataMasque and target database servers.

- Ensure latency and hops from DataMasque to target database servers are as low as possible.
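As a rough way to check the latency point above, the following Python sketch times how long it takes to open a TCP connection to a host and port. It is only an approximation of network round-trip cost, not a DataMasque feature: the function name tcp_connect_latency_ms is made up for this example, and the demonstration connects to a throwaway local listener so that it runs anywhere. In practice you would pass your database server's host and listener port, and run it from the DataMasque host.

```python
import socket
import statistics
import time


def tcp_connect_latency_ms(host, port, attempts=5, timeout=3.0):
    """Return the median time in milliseconds to open a TCP connection."""
    samples = []
    for _ in range(attempts):
        start = time.perf_counter()
        # Open and immediately close a connection; only the handshake is timed.
        with socket.create_connection((host, port), timeout=timeout):
            pass
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)


# Demonstration against a temporary local listener (stand-in for a database port).
listener = socket.socket()
listener.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
listener.listen()
port = listener.getsockname()[1]

latency = tcp_connect_latency_ms("127.0.0.1", port, attempts=3)
listener.close()
print(f"median connect time: {latency:.2f} ms")
```

A median is used rather than a mean so that a single slow handshake does not skew the result.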

Health monitoring

DataMasque provides a health check API endpoint to facilitate simple integration into your existing application monitoring tooling. To learn more about using this endpoint, see the API documentation for authentication and the health check API endpoint.
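A monitoring integration could look something like the following Python sketch. It rests on several assumptions that should be checked against the API documentation: the health check URL is passed in by the caller because its path is not given here, the request is assumed to need the same token header as other API requests, and an HTTP 200 response is assumed to mean healthy. The function name is_healthy is hypothetical.

```python
import urllib.request


def is_healthy(health_url, api_token, timeout=5.0, opener=urllib.request.urlopen):
    """Return True when the health check URL responds with HTTP 200.

    Assumptions: the endpoint needs token authentication and signals
    health with a 200 status. Confirm both in the API documentation.
    """
    request = urllib.request.Request(
        health_url,
        headers={"Authorization": "Token " + api_token, "Accept": "application/json"},
    )
    try:
        with opener(request, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # URLError and HTTPError are subclasses of OSError, so connection
        # failures and error statuses are both reported as unhealthy.
        return False
```

The opener parameter exists only so the function can be exercised without a network connection; a scheduled monitoring job would call is_healthy with the real URL and token and raise an alert when it returns False.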

Data protection

For dedicated DataMasque instances deployed on virtual machines, it is recommended to take regular virtual machine snapshots/backups.

It is also recommended to periodically save copies of your Run Logs, as well as Ruleset and Connection configurations.

Adequate security measures should also be in place to protect the DataMasque server and backed-up files. Knowledge of masking rulesets may aid an attacker's attempts to derive information from masked data, and certain secrets may enable an attacker to reproduce deterministic masking operations (See: Freezing random values).

Consistent masking

While the most secure approach to data masking (and the default behaviour within DataMasque) is to generate masked values independently in each masking run, some use cases will require consistent masking across multiple runs (facilitated by deterministic masking features).

If you wish to make the masked values deterministic from the same input values, and for the same deterministic values to be generated across multiple masking runs, you can specify a run_secret in the run options while using a hash_columns list in a rule.

Important! It is critical to store run_secret securely and rotate it as frequently as possible, as it is used to influence the internal hashing algorithm to produce repeatable and consistent masked values.

By default, DataMasque ties this deterministic behaviour to a specific DataMasque application instance, so the exact same ruleset and run_secret will yield different deterministic values if transferred to a different DataMasque instance, making it more difficult to identify masked values for a given hash input. The admin user may rotate the instance secret that determines the masking behaviour for the DataMasque instance from the Settings page. After rotating the instance secret, subsequent deterministic masking will be based on a new instance secret, and existing rulesets will produce different masking results. For added security, the instance secret cannot be viewed or reverted to a previous value, so please ensure you no longer require consistency with earlier masking runs before rotating the instance secret.

Example

This example ruleset will mask the date_of_birth column with a date value that has been deterministically generated based on the hash of date_of_birth and first_name column values combined with the run's run_secret.

For example, in every row where date_of_birth = '2000-01-01' and first_name = 'Carl', the date_of_birth will be replaced with a deterministically generated value such as 1986-05-11. This same replacement value will be generated during each repeated masking run, including masking across different data sources (such as Oracle and Microsoft SQL Server databases).

    version: '1.0'
    tasks:
      - type: mask_table
        table: users
        key: user_id
        rules:
          - column: date_of_birth
            hash_columns:
              - date_of_birth
              - first_name
            masks:
              - type: from_random_datetime
                min: '1980-01-01'
                max: '2000-01-01'
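To build intuition for how hash_columns and run_secret interact, the following Python sketch derives a repeatable date from the hashed column values and a secret. It is a conceptual illustration only: the HMAC-SHA256 construction and the function name deterministic_date are assumptions made for this example, not DataMasque's internal algorithm, so the dates it produces will not match DataMasque output.

```python
import hashlib
import hmac
from datetime import date, timedelta


def deterministic_date(hash_values, secret, min_date, max_date):
    """Map hashed column values to a repeatable date in [min_date, max_date].

    Illustrative only: this is NOT DataMasque's internal algorithm.
    """
    # Join the column values with a separator that is unlikely to appear in data.
    message = "\x1f".join(str(value) for value in hash_values).encode("utf-8")
    # Key the hash with the secret so results cannot be reproduced without it.
    digest = hmac.new(secret.encode("utf-8"), message, hashlib.sha256).digest()
    span_days = (max_date - min_date).days + 1
    offset = int.from_bytes(digest[:8], "big") % span_days
    return min_date + timedelta(days=offset)


low, high = date(1980, 1, 1), date(2000, 1, 1)
first = deterministic_date(["2000-01-01", "Carl"], "example-secret", low, high)
second = deterministic_date(["2000-01-01", "Carl"], "example-secret", low, high)
rotated = deterministic_date(["2000-01-01", "Carl"], "rotated-secret", low, high)

print(first == second)  # True: same inputs and secret give the same date
print(first, rotated)   # a rotated secret will usually give a different date
```

The sketch also shows why the secret must be protected: anyone holding both the ruleset and the secret could recompute the mapping from input values to masked values.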


Different use cases will require consistency of masking across different scopes (e.g. across databases, across time periods, across DataMasque instances). We recommend the following approaches to address these consistent masking requirements:

- For consistent masking across multiple masking runs (which may target different databases), specify the same run_secret in the options of each run. If you are starting runs via the API, you can leave the run_secret unset to allow it to be automatically generated, and then use the value returned in the API response when starting subsequent masking runs. You should discard the run_secret as soon as you have started all of the runs that you require to be consistent, as prolonged storage of the run_secret risks it being acquired by an attacker.

- For consistent masking over a longer period of time (e.g. all masking runs over the course of a week), you may need to store the run_secret for re-use when starting each of these runs. For these cases, the run_secret should be stored securely and rotated as frequently as your use case allows.

- For consistent masking across DataMasque instances, you can disable the instance secret in the options for a specific run. Runs performed on different DataMasque instances (of the same version) with the instance secret disabled and with the same run_secret will have consistent masking. However, disabling the instance secret will mean that an attacker with access to the masking ruleset and run_secret could potentially reproduce your masking results on a different DataMasque instance. Therefore, you should ensure the run_secret is shared securely between the DataMasque instances and ensure that the run_secret is regularly rotated.
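The first approach can be sketched in Python as follows. The payload shape follows the example scripts in the Automation section; placing run_secret as a key inside options is an assumption based on the wording above, and the helper name build_run_payload and the placeholder secret value are hypothetical. The name of the field in the API response that carries the generated secret is not shown here, so check the API Reference for it.

```python
import json


def build_run_payload(connection_uuid, ruleset_uuid, run_secret=None):
    """Build the JSON body for creating a masking run."""
    options = {"dry_run": False, "batch_size": "50000"}
    if run_secret is not None:
        # Assumption: run_secret is supplied as a key inside "options".
        options["run_secret"] = run_secret
    return {
        "name": "api_masking_run",
        "connection": connection_uuid,
        "ruleset": ruleset_uuid,
        "options": options,
    }


# First run: leave run_secret unset so that it is generated automatically.
first = build_run_payload("connection-uuid-a", "ruleset-uuid")

# Later runs: reuse the secret returned when the first run was started.
shared_secret = "value-returned-by-first-run"  # placeholder, not a real secret
second = build_run_payload("connection-uuid-b", "ruleset-uuid", run_secret=shared_secret)

print(json.dumps(second, indent=2))
```

Keeping the secret in a local variable only for as long as the related runs are being started matches the advice to discard it as soon as possible.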

Host security

It is recommended to deploy DataMasque on a dedicated VM/server and apply the standard security practices of your organisation to the host VM/server. Such best practices include, but are not limited to:

- Use of a network firewall

- Strict access control to the host OS

- Regular OS security patching

- Host filesystem encryption

- Intrusion detection

- Virus scanning

Automation

Masking runs can be triggered programmatically via the DataMasque API.

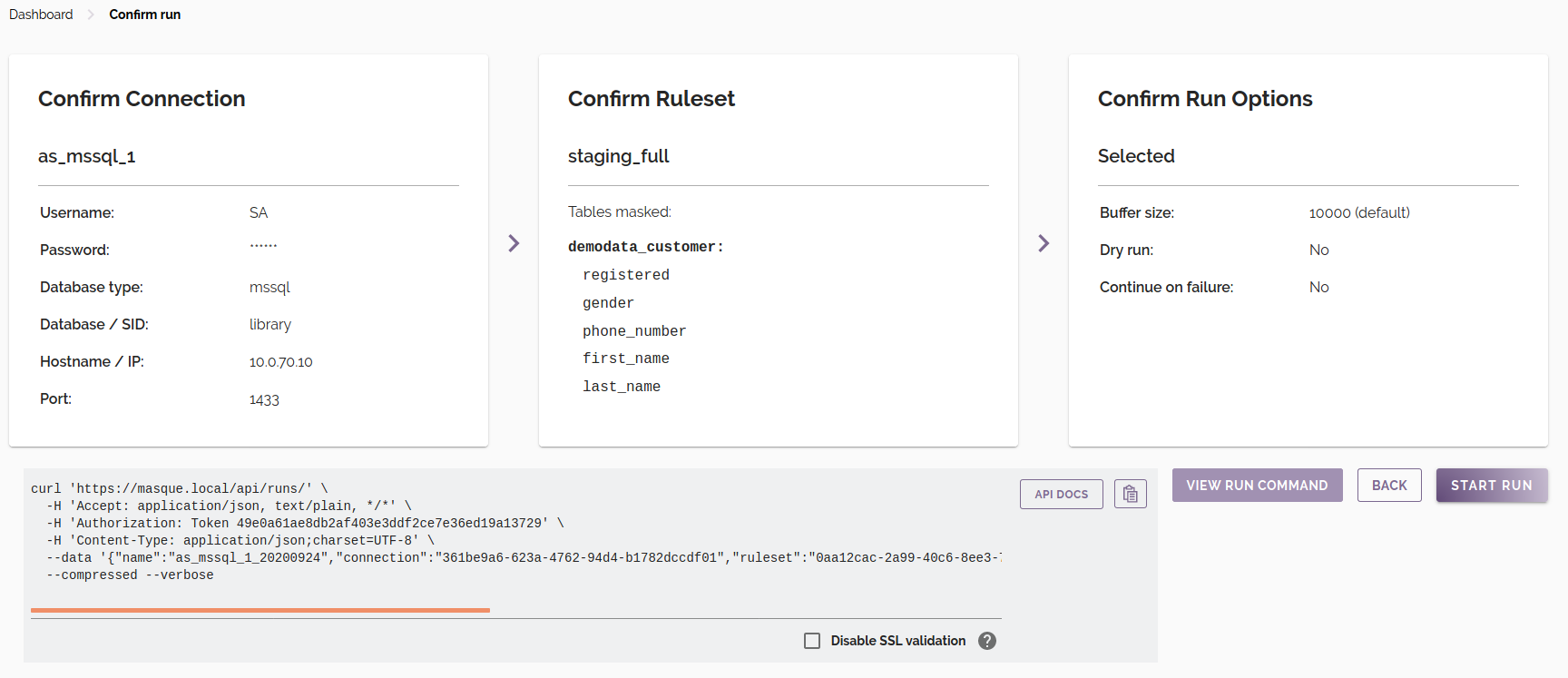

curl commands

The Preview and Confirm Run screen provides an example curl command that can be used to execute a masking run with the same configuration. To view the command, click the View Run Command button.

Note: curl will not accept DataMasque's default self-signed SSL certificate unless it is manually installed on your client machine. In the case where you do not have your own SSL certificate installed, you will need to check the 'Disable SSL validation' checkbox to include the --insecure flag in the generated curl command.

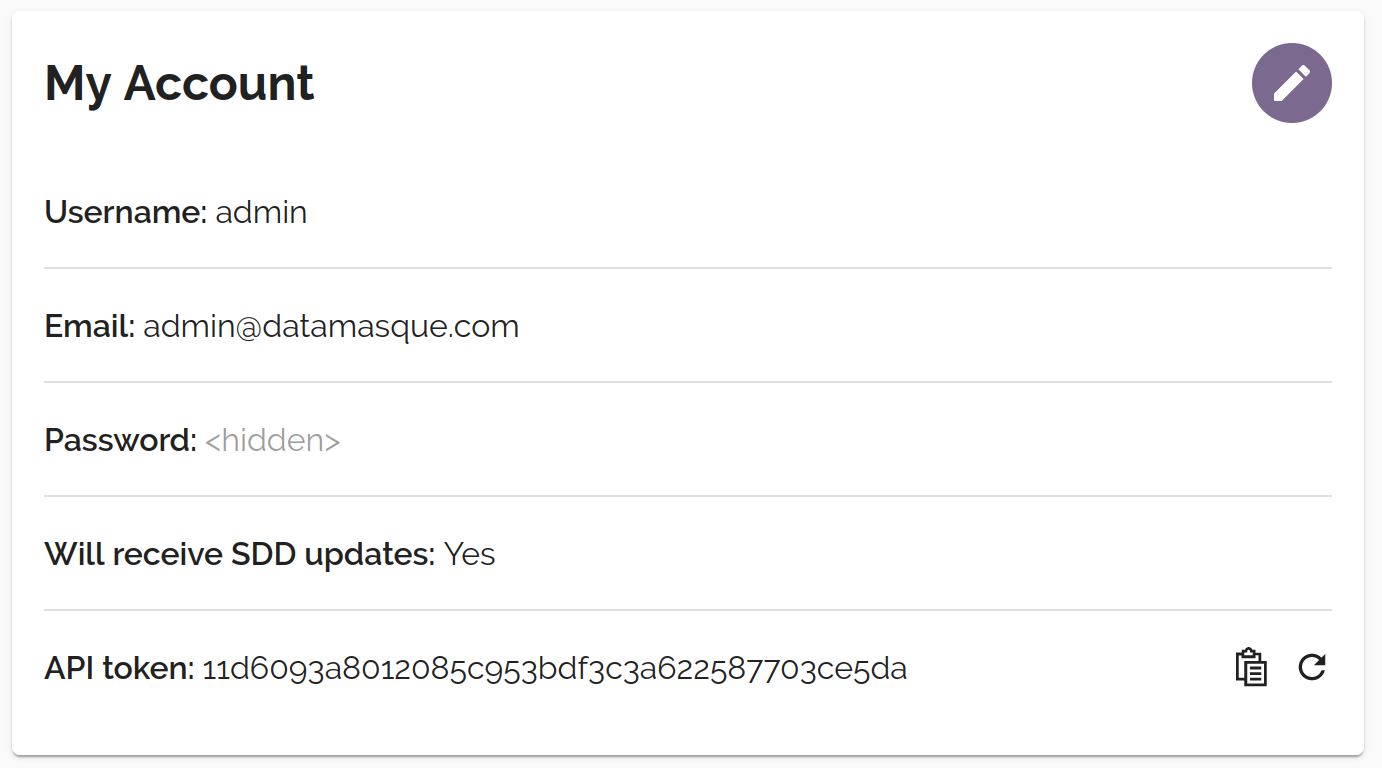

Authentication

The DataMasque API uses token-based authentication. You can get your token from the My Account screen:

You must include the token in the Authorization header of all API requests:

    GET /runs/123
    Authorization: Token <your-api-token>
    Accept: application/json

Endpoints

The following API endpoints are available:

| Method | URL | Description |
|---|---|---|
| GET | /runs/ | Get a list of all masking runs |
| POST | /runs/ | Create and start a masking run |
| GET | /runs/{id}/ | Get the current status of a masking run |
| GET | /runs/{id}/logs/ | Get the logs for a masking run |
| POST | /runs/{id}/cancel/ | Cancel a masking run |

For more detailed information on these endpoints please refer to the API Reference.
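As an illustration of how the endpoints in the table might be called, the following Python sketch builds authenticated requests for fetching the logs of a run and for cancelling it. It only constructs the requests and does not send them. The /api prefix is taken from the example scripts on this page, sending the cancel request with an empty body is an assumption, and the host name, token, run ID, and helper name build_request are placeholders.

```python
import urllib.request


def build_request(host, token, path, method="GET"):
    """Build an authenticated request for a DataMasque API path."""
    # Assumption: endpoints are served under /api, as in the example scripts.
    url = host.rstrip("/") + "/api" + path
    headers = {"Authorization": "Token " + token, "Accept": "application/json"}
    # Assumption: POST endpoints such as cancel accept an empty body.
    data = b"" if method == "POST" else None
    return urllib.request.Request(url, data=data, headers=headers, method=method)


host = "https://datamasque.example.com"  # placeholder host
token = "your-api-token"                 # placeholder token
run_id = 123                             # placeholder run ID

logs_request = build_request(host, token, f"/runs/{run_id}/logs/")
cancel_request = build_request(host, token, f"/runs/{run_id}/cancel/", method="POST")

print(logs_request.get_method(), logs_request.full_url)
print(cancel_request.get_method(), cancel_request.full_url)
```

Either request can then be sent with urllib.request.urlopen. Fetching and storing the logs this way is one option for keeping periodic copies of your Run Logs.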

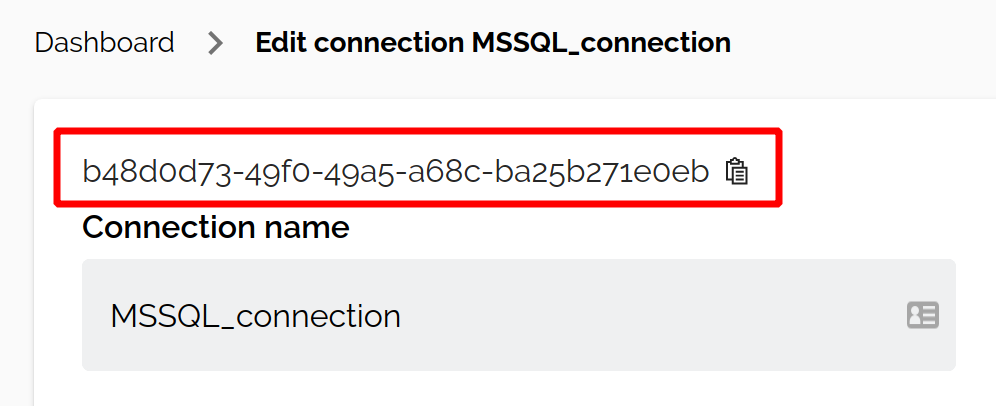

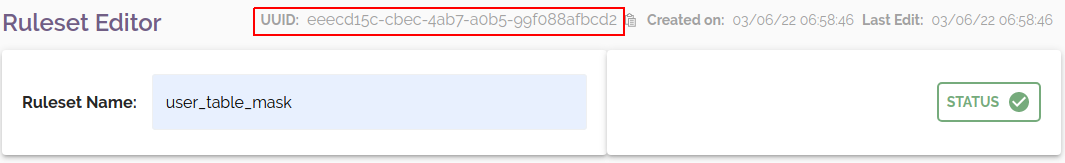

UUIDs

Runs can be triggered with the API using any existing connection and ruleset specified by UUID. You can find the UUID for any connection or ruleset by opening it for editing from the Dashboard. The UUID is located at the top of the page:

Example script

Assuming the corresponding connection and ruleset have been configured in DataMasque, the following script will schedule a masking run and wait until it reaches a finalised status.

| Environment variable | Description |
|---|---|
| DATAMASQUE_HOST | The URL of your DataMasque instance (including port) |
| DATAMASQUE_API_TOKEN | Your DataMasque API token (see API Reference) |
| CONNECTION_UUID | The UUID of the database connection that masking will be applied to |
| RULESET_UUID | The UUID of the masking ruleset that will be used in the masking run |

    # Create and start the masking run, then capture its ID.
    # -k skips SSL validation (needed for the default self-signed certificate).
    RUN_ID=$(curl "$DATAMASQUE_HOST/api/runs/" \
      -H 'Accept: application/json, text/plain, */*' \
      -H "Authorization: Token $DATAMASQUE_API_TOKEN" \
      -H 'Content-Type: application/json;charset=UTF-8' \
      --data '{"name":"api_masking_run","connection":"'"$CONNECTION_UUID"'","ruleset":"'"$RULESET_UUID"'","options":{"dry_run":false,"batch_size":"50000"}}' \
      --compressed -k | jq -r '.id')
    echo "$(date -Iseconds): RUN_ID $RUN_ID created!"

    STATUS=''
    spin='-\|/'
    index=0
    # Poll once per second until the run reaches a final status.
    while [[ $STATUS != 'finished' && $STATUS != 'failed' && $STATUS != 'cancelled' ]]; do
      STATUS=$(curl -s "$DATAMASQUE_HOST/api/runs/$RUN_ID/" \
        -H 'Accept: application/json, text/plain, */*' \
        -H "Authorization: Token $DATAMASQUE_API_TOKEN" \
        -k \
        | jq -r '.status')
      index=$(( (index + 1) % 4 ))
      # Use a %s format so the backslash spinner character is printed literally.
      printf '\r%s' "${spin:$index:1}"
      sleep 1
    done
    printf '\r%s: RUN_ID %s %s!\n' "$(date -Iseconds)" "$RUN_ID" "$STATUS"

Alternatively, use the following Python script (which requires the requests library) to schedule a masking run and wait until it reaches a finalised status:

    import datetime
    import json
    import sys
    import time

    import requests

    DATAMASQUE_HOST = 'https://datamasque-app-host-name'
    DATAMASQUE_API_TOKEN = 'datamasque-api-token'
    CONNECTION_UUID = 'connection-uuid'
    RULESET_UUID = 'ruleset-uuid'


    def spinning_cursor():
        """Yield spinner characters forever."""
        while True:
            for cursor in '|/-\\':
                yield cursor


    run_payload = json.dumps({
        "name": "api_masking_run",
        "connection": CONNECTION_UUID,
        "ruleset": RULESET_UUID,
        "options": {
            "batch_size": "50000",
            "dry_run": False
        }
    })
    headers = {
        'Authorization': 'Token ' + DATAMASQUE_API_TOKEN,
        'Content-Type': 'application/json'
    }

    # Create and start the masking run.
    # Note: requests validates SSL certificates by default.
    response = requests.request("POST", DATAMASQUE_HOST + '/api/runs/', headers=headers, data=run_payload)
    response.raise_for_status()
    run_id = response.json()['id']
    print(datetime.datetime.now(), 'RUN ID {} created'.format(run_id))

    # Poll once per second until the run reaches a final status.
    status = ''
    spinner = spinning_cursor()
    while status not in ['finished', 'failed', 'cancelled']:
        sys.stdout.write(next(spinner))
        sys.stdout.flush()
        time.sleep(1)
        sys.stdout.write('\b')
        response = requests.request("GET", '{}/api/runs/{}/'.format(DATAMASQUE_HOST, run_id), headers=headers)
        response.raise_for_status()
        status = response.json()['status']

    print(datetime.datetime.now(), 'RUN ID {} {}!'.format(run_id, status))