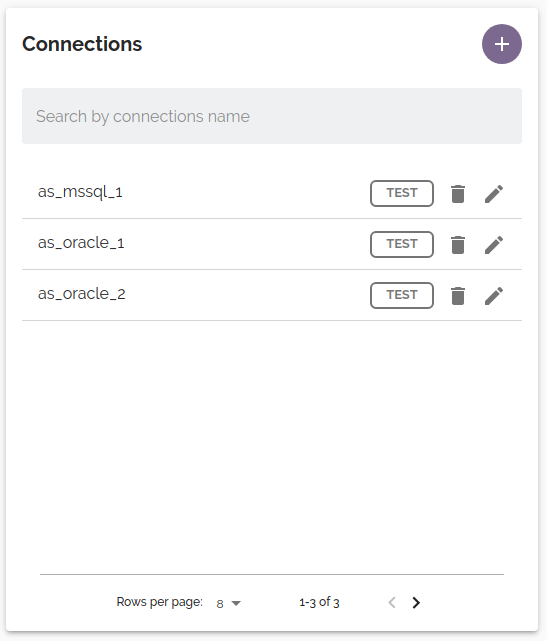

Connections

Connections define the databases that are available for DataMasque to run masking jobs against.

- Supported data sources

- Supported data types

- Add a new connection

- View and edit connection

- Delete connection

- Encrypted connections

- Required privileges

Supported data sources

DataMasque supports Oracle, Microsoft SQL Server, PostgreSQL, Amazon Redshift, Amazon DynamoDB and MySQL databases.

| Databases | Versions |

|---|---|

| Amazon Aurora | - |

| Amazon DynamoDB | - |

| Amazon RDS | - |

| Amazon Redshift | - |

| Microsoft SQL Server | 2012, 2014, 2016, 2017, 2019 |

| Microsoft SQL Server (Linked Server)* | 2019 |

| MySQL | 5.7, 8.0 |

| Oracle | 11gR2, 12gR1, 12gR2, 18c, 19c |

| PostgreSQL | 9.6, 10, 11, 12, 13 |

The Masking Process

For relational databases listed below, DataMasque masks in place, that is, it directly replaces data in the target database with masked data.

Microsoft SQL Server

MySQL

Oracle

PostgreSQL

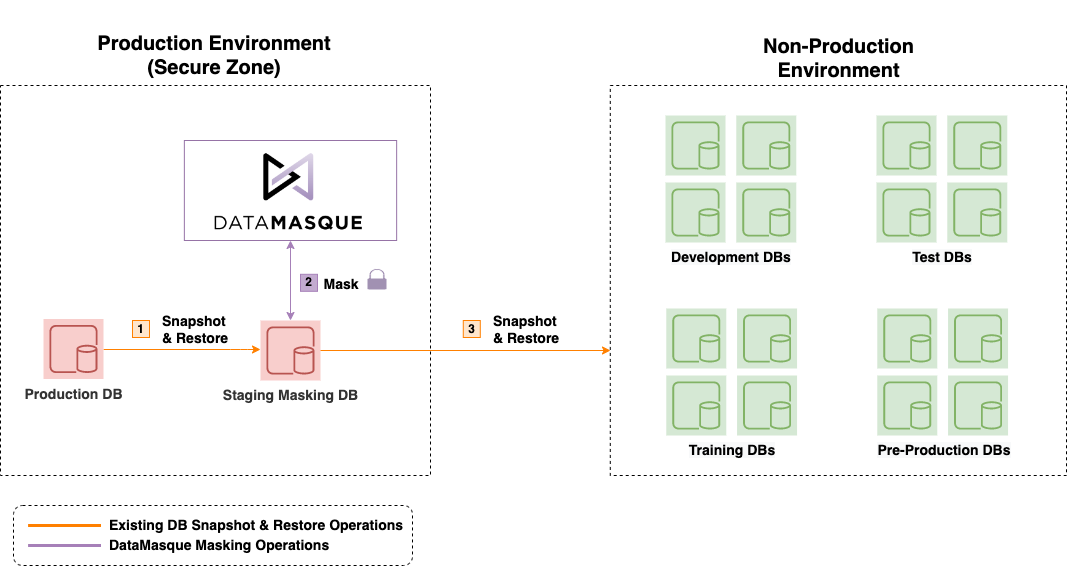

This diagram shows the high level reference deployment process.

DataMasque is expected to run in the secure production zone, and mask a clone of production data. Once masking is complete, the masked clone can itself be cloned into non-production environments.

For some databases, the process is different. These special cases are listed below.

Amazon DynamoDB Masking Process

Amazon DynamoDB databases are masked by exporting the table to an S3 bucket, splitting the exported files into batch files of manageable sizes, masking the data, and then importing into a new Amazon DynamoDB table with a new name. The existing table is retained. This method is used because table scans and bulk updates of Amazon DynamoDB are not performant.

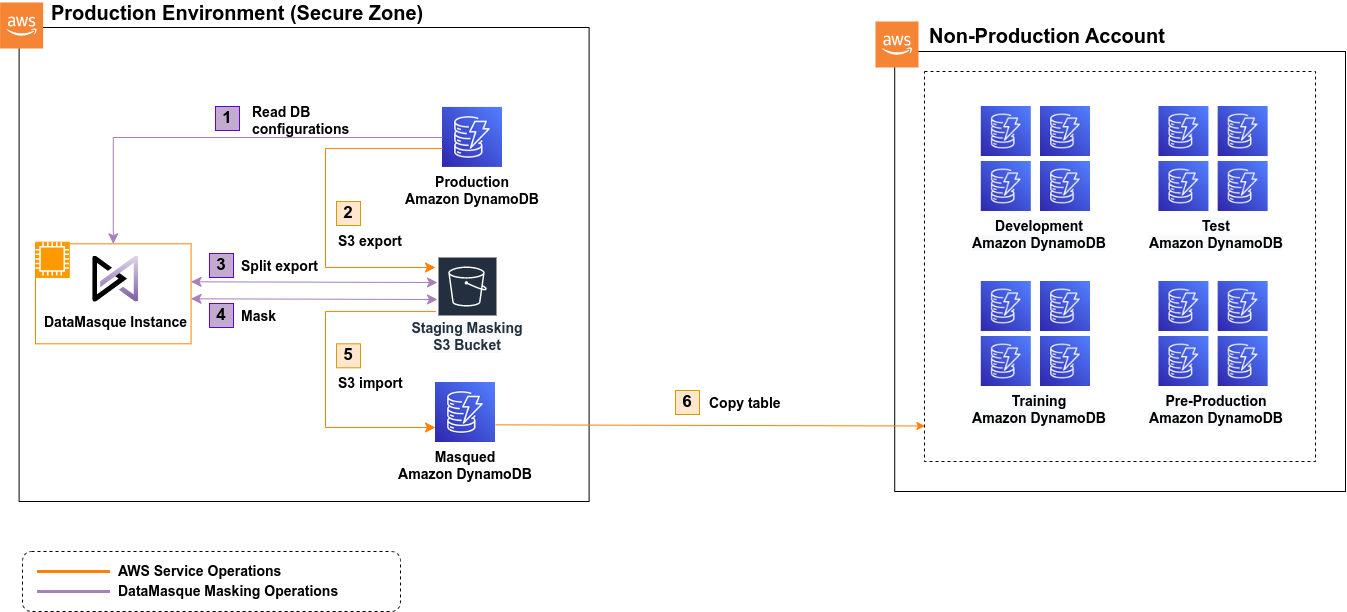

The following diagram shows the high level data masking workflow for Amazon DynamoDB:

The data in the S3 bucket is removed at the end of a successful masking run.

Important! Since this staging bucket will have sensitive data stored in it temporarily, access to the bucket should be confined to trusted users/IAM roles only.

Due to the speed of Amazon DynamoDB Data Export and Amazon DynamoDB Data Import, masking in Amazon DynamoDB can take longer than standard SQL database masks. Most of this time is taken up by the Amazon DynamoDB import and export processes, which are internal to AWS and so DataMasque has no control over their performance. Masking a table with a single item can take up to ten minutes, while masking 1,000,000 items takes around 20 minutes, so the time does not scale linearly with the number of items.

Warning!: On failed or cancelled masking runs, the staging S3 bucket should be checked and left over export data may need to be manually cleaned up. This is because DataMasque might not be able to clean up exported data as it depends on when and how the masking run failed. For example, if an export is in process when a run is cancelled then AWS may continue to execute it and export data.

If a run fails during the import process, the target table may still have been created with no items. Check the AWS Management Console for Amazon DynamoDB and delete the table if it exists.

For more information please refer to the AWS Documentation for Amazon DynamoDB

Amazon Redshift Masking Process

Amazon Redshift databases are masked by exporting the table to an S3 bucket, then masking the data and inserting it into

a new table with the same name, and the old table is dropped. This is done with the use of the UNLOAD and COPY

commands. Therefore, to mask Redshift, an S3 bucket is required. The data in the bucket is removed at the end of a

successful masking run.

Warning! If a run fails or is cancelled, the data is attempted to be deleted from the S3 bucket but this is not always possible (for example, if the server running DataMasque is halted). Therefore, after unsuccessful (failed or cancelled) runs, the staging bucket should be checked manually for leftover exported data.

Important! Since this staging bucket will have sensitive data stored in it temporarily, access to the bucket should be confined to trusted users/IAM roles only.

Microsoft SQL Server (Linked Server) Masking Process

Using Microsoft SQL Server with Linked Server allows the SQL Server to act as a "proxy" to a second server. DataMasque supports masking to IBM DB2 and Sybase Adaptive Server Enterprise linked servers. DataMasque will mask in place on these servers, however the overhead of using Linked Servers means that the masking process is not as performant as with natively supported databases.

Database limitations/unsupported features

Not all databases that DataMasque can connect to support all DataMasque's features. Database specific limitations are listed below:

Amazon DynamoDB Unsupported Features

When using Amazon DynamoDB, the Data Discovery and Schema Discovery features of DataMasque are unsupported.

Joining tables using join syntax is unsupported.

The following tasks are unavailable:

run_sqlmask_unique_keytruncate_tablebuild_temp_tablerun_data_discoveryrun_schema_discoveryparallel

The alternative methods below can be considered in absence of the unavailable features:

The from_unique mask should be used instead of

mask_unique_key.

The where option in mask_table in unsupported.

Conditional masking with if should be used instead.

Amazon Redshift Unsupported Features

When using Amazon Redshift, the following tasks are unavailable:

mask_unique_key

A mask_table task is not supported on tables that are referenced by foreign keys, as the table can't be dropped and

recreated due to breaking this reference.

Important! When the new (masked) table is created, it will not contain the PK constraints, UK constraints or FK

constraints of the source table (although Redshift does not enforce these constraints). NOT NULL constraints are also

not retained.

Microsoft SQL Server (Linked Server) Unsupported Features

When using the Linked Server feature of Microsoft SQL Server, the following tasks are unavailable:

run_sqltruncate_tablebuild_temp_tablemask_unique_key

The Data Discovery and Schema Discovery features of DataMasque are unsupported.

Loading supplementary columns by joining tables in the ruleset is unsupported.

Supported Data Types

Amazon DynamoDB data types

The following Amazon DynamoDB data types are supported.

| Data type | Description |

|---|---|

| string | Unicode string with UTF-8 binary encoding. Can be a length of zero, or up to 400KB of data. |

| number | Numerical value with 38 digits of precision. |

| boolean | Can be true or false. |

| null | Represents an attribute with an unknown or undefined state. |

| list | Stores and ordered collection of values. Should be masked with a json mask. |

| map | Stores and unordered collection of name-value pairs. Should be masked with a json mask. |

| binary (partial support) | Store any binary data up to 400KB. While DataMasque does not have mask types for manipulating binary data, values from binary columns can be copied to other columns or replaced with non-binary values (e.g. null). |

Amazon Redshift data types

The following Amazon Redshift data types are supported.

| Data type | Description |

|---|---|

| char(n) | Fixed-length character data of n size. |

| nvarchar(n) | Variable-length character data of n size. |

| numeric, decimal | Numerical value with decimal precision. |

| int8, bigint | 64 bit integer value. |

| int, int4, integer | 32 bit integer value. |

| int2, smallint | 16 bit integer value. |

| float4, real | 32 bit numerical value with 6 decimal digits of precision. |

| float8, double precision | 64 bit numerical value with 15 decimal digits of precision. |

| bool, boolean | true or false. |

| date | Date value with no time. |

| timestamp | Timestamp including both a date and time. |

| timestamp with time zone | Timestamp including time zone. |

Microsoft SQL Server data types

The following Microsoft SQL Server data types are supported.

| Data type | Description |

|---|---|

| char(n) | Fixed-length character data of n size. |

| varchar(n) | Variable-length character data of n size. |

| text | 32 bit string value. |

| nvarchar(n) | Variable-length character data of length n characters or bytes using national character set. |

| nchar(n) | Fixed-length character data of length n using national character set. |

| nvarchar2(n) | Variable-length character data of length n characters or bytes using national character set. |

| decimal(p,s) | Numerical value with p precision and s decimal places. |

| bigint | 64 bit integer value. |

| int | 32 bit integer value. |

| smallint | 16 bit integer value. |

| tinyint | 8 bit integer value. |

| float, real | Floating point number. |

| money | Currency value. |

| date | Date value with no time. |

| datetime | Date including a time. |

| datetimeoffset | Date and time, including time zone. |

| datetime2 | Date that is combined with a time of day based on 24-hour clock. |

| xml | XML data. |

Important! When attempting to mask

textdata type in Microsoft SQL Server, 2GB will be allocated for each text element when determining the maximum size of the element. To ensure that masking can run correctly, please ensure enough memory is available to the DataMasque instance.

MySQL data types

The following MySQL data types are supported.

| Data type | Description |

|---|---|

| char(n) | Fixed-length character data of n size. |

| varchar(n) | Variable-length character data of n size. |

| text | 32 bit string value. |

| decimal, numeric | Numerical value with decimal precision. |

| tinyint | 8 bit integer value. |

| smallint | 16 bit integer value. |

| mediumint | 24 bit integer value. |

| int | 32 bit integer value. |

| bigint | 64 bit integer value. |

| float | 32 bit floating point value. |

| double | 64 bit floating point value. |

| date | Date value with no time. |

| datetime, timestamp | Date value with a time. |

| time | Time value. |

| json | For storing JSON (JavaScript Object Notation) data. |

Important! MySQL

autoincrementprimary keys are not currently supported for themask_unique_keytask type.

Oracle data types

The following Oracle data types are supported.

| Data type | Description |

|---|---|

| char(n) | Fixed-length character data of 'n' size. |

| varchar2(n) | Variable-length character data of n size. |

| nvarchar2(n) | Variable-length character data of length n characters or bytes using national character set. |

| nchar(n) | Fixed-length character data of length n using national character set. |

| long | Variable-length character data. |

| float, number | Floating point number. |

| int | Whole 32 bit number. |

| date | Date not including time. |

| timestamp | Timestamp including both a date and a time. |

| timestamp with time zone | Timestamp including time zone. |

| timestamp with local time zone | Timestamp including local time zone. |

| xml type | XML data. |

PostgreSQL data types

The following PostgreSQL data types are supported.

| Data type | Description |

|---|---|

| char(n) | Fixed-length character data of n size. |

| varchar(n) | Variable-length character data of n size. |

| text | 32 bit string value. |

| decimal, numeric | Numerical value with decimal precision. |

| bigint, int8 | 64 bit integer value. |

| int, int4, integer | 32 bit integer value. |

| int2, smallint | 16 bit integer value. |

| float4, real | 32 bit numerical value with 6 decimal digits of precision. |

| double | 64 bit numerical value with 15 decimal digits of precision. |

| money | Currency value. |

| boolean | true or false. |

| date | Date value with no time. |

| timestamp | Timestamp including both a date and time. |

| timestamp with time zone | Timestamp including time zone. |

| json | For storing JSON (JavaScript Object Notation) data. Stores an exact copy of the input text. |

| jsonb | For storing JSON (JavaScript Object Notation) data. Stored in a decomposed binary format. |

| xml | XML data. |

Add a new connection

To add a connection, click the ![]() button

from the Connections panel of the Database Masking Dashboard.

button

from the Connections panel of the Database Masking Dashboard.

Use the form to configure the connection parameters for your database. You may validate that the connection parameters are correct using the Test Connection button. This will attempt a database connection using the provided parameters. If the connection fails, an error will be shown with the failure details. The Test button is also available from the dashboard.

Connection Parameters

Standard parameters

| Connection name | A unique name for the connection across the DataMasque instance. May only contain alphanumeric characters and underscores. |

| Database type | The type/engine of the database. Available options: Oracle, Microsoft SQL Server, Microsoft SQL Server (Linked Server), PostgreSQL, Amazon Redshift, MySQL. |

| Username | The username DataMasque will use to authenticate with the database server. |

| Password | The password DataMasque will use to authenticate with the database server. |

| Database / SID | The database name (or SID for Oracle databases). |

| Hostname / IP | The hostname or IP address of the database server. |

| Port | The port of the database server. |

Note: For AWS Aurora databases, use cluster endpoint for the

Database / SIDField.

Database Specific parameters

Amazon Redshift specific parameters

For Amazon Redshift databases, the following additional fields are required:

| S3 bucket name | Name of the S3 bucket to UNLOAD to and COPY from. |

| Amazon Redshift IAM Role | ARN of an IAM Role that allows Amazon Redshift to access the S3 bucket. |

Note: see the section Amazon Redshift additional configuration for details on these fields.

Amazon DynamoDB specific parameters

For Amazon DynamoDB databases, the following additional fields apply:

| S3 bucket name | Name of the S3 bucket to temporarily store Amazon DynamoDB exports and imports. |

| Encryption key management | The method of key management used for server-side encryption of the created masked table. |

| KMS key ID (optional) | The ID of the key to use for SSE, if using an account-managed key. |

Amazon DynamoDB data is exported into a directory called, AWSDynamoDB in the root of the S3 bucket specified. This directory

will be created as part of the export process.

For details on the Encryption key management and KMS key ID fields, see the section Amazon DynamoDB Encrypted Connections.

Specifying Amazon DynamoDB tables

The Amazon DynamoDB tables to mask are not specified as part of the connection settings, instead they are specified in the ruleset YAML. Refer to the table masks documentation for information on specifying table names.

Advanced settings

Amazon DynamoDB Advanced Settings

Masking of Amazon DynamoDB creates a new table with a name based on the source table. The following fields control how the table is named, and removal of existing tables:

| Masked Table Name: Suffix | Masked tables are created as the source table with a random suffix. Enter a suffix to use instead of a random one. |

| Masked Table Name: Append datetime in ISO format | Append the date and time of the masking run to the new table name (as well as a suffix, if specified). |

| Replace existing tables | If checked, DataMasque will replace tables with the same name; otherwise, the masking run will fail if tables already exist with the generated name. |

Example Generated Table Names

In this example, masking will be applied to an Amazon DynamoDB table named users. This table shows example new table names

based on different combinations of the advanced settings.

| Suffix | Append datetime in ISO format | Result | New table name |

|---|---|---|---|

| unspecified | Not checked | Random suffix added | users-603d015a1 |

-masked |

Not checked | Specified suffix added | users-masked |

| unspecified | Checked | Datetime added | users-202303071922 |

-masked |

Checked | Suffix and datetime added | users-masked-202303071922 |

Note that the suffix is added verbatim: the leading dash is part of the suffix as a seperator. This may be omitted if no seperator is desired. A dash is always added before the ISO date.

Replace existing tables setting

Sometimes the name of the Amazon DynamoDB masked table may already exist. It is not very likely to happen when using a random suffix or appending the current date and time, but when specifying a suffix and masking a table more than once, the new table will already exist (unless it was removed between masking runs).

If Replace existing tables is Checked then an existing table with the same name will be deleted. A new table will be created with masked data.

If Replace existing tables is Not checked, and an existing table with the generated name already exists, then DataMasque will stop the masking run and log an error.

This table summarises the behaviour:

| Replace Existing Tables | Table With Generated Name Exists | Outcome |

|---|---|---|

| Not Checked | No | Table created |

| Not Checked | Yes | Masking run fails |

| Checked | No | Table created |

| Checked | Yes | Existing table deleted and new table created |

Note that since a suffix is always added during masking, the source table will never be deleted as the generated table name will always differ from the source table name.

Amazon Redshift Advanced Settings

For Amazon Redshift databases, the following field is optional:

| Schema | The name of the schema that DataMasque will operate on. |

Microsoft SQL Server and Linked Server Advanced Settings

For Microsoft SQL Server databases (including Linked Servers), the following field is optional:

| Instance | The instance name of the named database instance that DataMasque will connect to. |

Note: If a port number is specified in the connection, the value of the instance will be ignored when connecting. In order to connect to a named instance, the port number must be left blank. By default, named instances are configured on a dynamic port: it is best practise to use a non-default port where possible to minimise possible attack vectors.

Microsoft SQL Server (Linked Server) specific parameters

For Microsoft SQL Server (Linked Server) databases, the following field is required:

| Linked Server | The Identifier for the linked server to retrieve tables. The required format is: <LinkedServerName>.<DatabaseName>.<SchemaName> (where the SchemaName is synonymous with the username for DB2). E.g. DB2_LINK.TESTDB.DB2INST1 or SYBASE_LINK.master.dbo |

MySQL Advanced Settings

For MySQL databases, the following field is optional:

| MySQL SSL Certificate | Choose a MySQL SSL Certificate to enable encrypted connections. These can be uploaded using the Files interface. |

Oracle Advanced Settings

For Oracle databases, the following fields are optional:

| Schema | The name of the schema that DataMasque will operate on. |

| Oracle wallet | Choose an Oracle wallet to enable encrypted connections. Oracle wallets can be uploaded using the Files interface. |

| Service name | The Oracle service name (overrides SID). Required for encrypted connections. |

PostgreSQL Advanced Settings

For PostgreSQL databases, the following field is optional:

| Schema | The name of the schema that DataMasque will operate on. |

View and edit connection

To edit a connection, click the edit (![]() ) button for the connection you wish to edit in the Connections panel of the Database masking dashboard.

) button for the connection you wish to edit in the Connections panel of the Database masking dashboard.

A full list of connection parameters can be found under Connection Parameters.

Delete connection

To delete a connection, open the connection for editing (see View and edit connection) and

click the Delete button. You will be prompted for confirmation before the connection is deleted. A connection can

also be deleted from the dashboard by clicking the trashcan icon.![]()

![]()

Encrypted connections

Amazon DynamoDB Encrypted Connections

All communication to Amazon DynamoDB is encrypted. Various options are available for server-side encryption (SSE) of data at rest.

Encryption settings can be specified at the connection level, which will apply to all tables when a ruleset is executed against that connection. However, the settings can be overridden on a per-table basis, with configuration specified in Run Options before starting a run. This will be looked at in more detail in the next section. First, we will explain what the options mean.

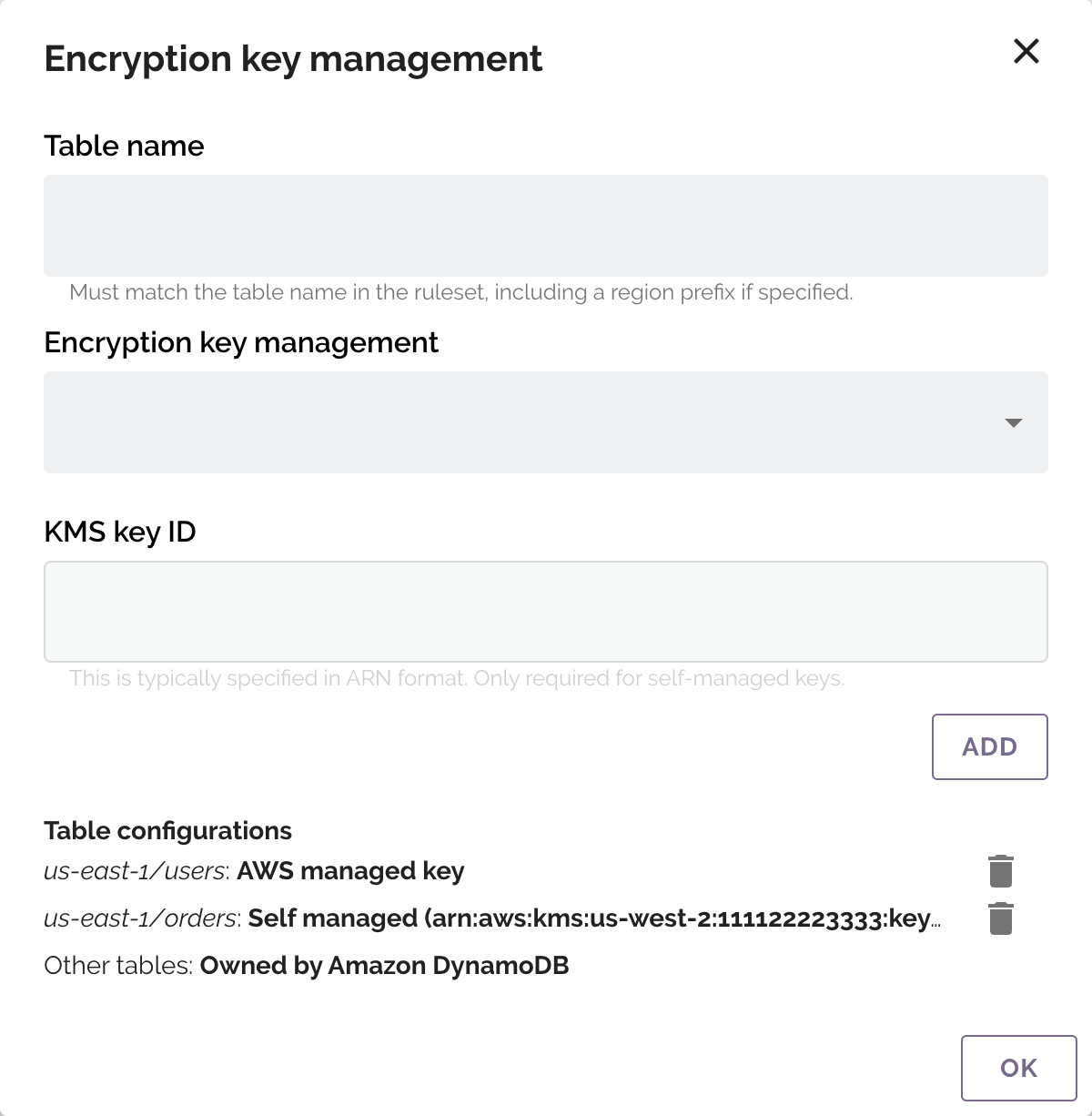

Encryption key management

Amazon DynamoDB has different options for how encryption keys are handled, and you can choose which you would like to use for SSE.

Owned by Amazon DynamoDB

- AWS owned key, specifically owned and managed by Amazon DynamoDB. You are not charged an additional fee for using this key. This is the default setting in the AWS console and for DataMasque.

AWS managed key

- The key is stored in your account and is managed by AWS Key Management Service (AWS KMS). AWS KMS charges apply.

Stored in your account, and owned and managed by you

- The key is stored in your AWS account and is managed by AWS Key Management Service (AWS KMS). AWS KMS charges apply. If choosing this option, a KMS key ID must be specified, and your IAM privileges must grant access to the specified key.

Use configuration from the original table

- Selecting this will use the same key as the source table. AWS do not recommend this. If the original table

specifies AWS managed key or Stored in your account, and owned and managed by you, then AWS KMS charges apply.

Additionally, your IAM profile must grant access to describe the

alias/aws/dynamodbKMS key alias (in order for DataMasque to check whether a given key is managed by AWS or your account). If the original table specifies Stored in your account, and owned and managed by you then your IAM profile must grant access to the KMS key of the original table.

- Selecting this will use the same key as the source table. AWS do not recommend this. If the original table

specifies AWS managed key or Stored in your account, and owned and managed by you, then AWS KMS charges apply.

Additionally, your IAM profile must grant access to describe the

Please also read the Amazon DynamoDB encryption at rest documentation for more explanation on key types in AWS/DynamoDB.

KMS key ID

When selecting the encryption key management option Stored in your account, and owned and managed by you, the ID of

the key must be specified. Typically, this is in an ARN format, for example

arn:aws:kms:us-west-2:111122223333:key/1234abcd-12ab-34cd-56ef-1234567890ab.

Your IAM privileges must grant access to the key. See Advanced SSE Key Permissions for more information.

Specifying SSE Options

SSE options can be configured in the Connection form and these will act as the defaults for all tables in the connection. These settings can be overridden per table, for a run, by specifying per-table settings in the Run Options panel on the Database Masking Dashboard.

Refer to the run options documentation for more information on setting options for a specific masking run.

After selecting an Amazon DynamoDB connection in the Connections list, the Override connection's Server Side Encryption settings checkbox will be available to select. To configure the settings per table, click the gear icon next to this setting, which will show the Encryption key management form.

The Table name should match the table name as specified in your ruleset. If the table name in the ruleset includes an AWS region, then it should also be included here. The options for Encryption key management and KMS key ID match those in the two prior sections.

Amazon Redshift Encrypted Connections

DataMasque supports encrypted connections to Amazon Redshift databases. Use the sslmode connection option to

control the connection encryption. No additional configuration is required in DataMasque.

Important: DataMasque doesn't support

sslmode=verify-caorsslmode=verify-fullfor Amazon Redshift currently. This is on our roadmap and will be included in future releases.

Microsoft SQL Server Encrypted Connections

DataMasque supports encrypted connections to Microsoft SQL Server instances that have the 'Force Protocol Encryption' option enabled. No additional configuration is required in DataMasque - encryption will be initiated by the server.

MySQL Encrypted Connections

DataMasque supports encrypted connections to MySQL databases. Encrypted connections require the use of MySQL SSL Certificates. These certificates can be uploaded through the Files interface.

Certificates must be uploaded as a .zip file containing the following file(s).

| File Name | Description |

|---|---|

| ca.pem | The Certificate Authority (CA) file. |

| client-cert.pem (optional) | The client public key certificate file. |

| client-key.pem (optional) | The client private key file. |

Depending on the server configuration, client-cert.pem and client-key.pem may not be required. For example, AWS RDS

requires only the Certificate Authority.

For more information on configuring MySQL to use encrypted connections, see here: https://dev.mysql.com/doc/refman/8.0/en/using-encrypted-connections.html.

Oracle Encrypted Connections

For Oracle databases, encrypted connections require the use of an Oracle Wallet. Oracle Wallets for your databases can be uploaded and managed through the Files interface.

Once uploaded, the Oracle Wallet to use for a Connection can be chosen under advanced settings.

Supported Oracle Wallet certificate signature algorithms

DataMasque recommends signing the Oracle Wallet certificate using an RSA CA key with one of the following signing

algorithms: sha256, sha384, or sha512. The sha1 algorithm is supported, but not recommended as it is insecure.

The md5 algorithm is unsupported.

Oracle Wallets signed with ECC keys are currently unsupported, however support will be added in future releases of DataMasque.

Note: the default

sign_algismd5in oracle 11g orapki/wallet manager, so it is recommended to explicitly specifysign_algwithsha256,sha384orsha512.

PostgreSQL Encrypted Connections

DataMasque supports encrypted connections to PostgreSQL instances that have the 'SSL mode' option enabled. No additional configuration is required in DataMasque - DataMasque will use encrypted connections with SSL mode preferred.

Note: DataMasque currently doesn't support

sslmode=verify-caorsslmode=verify-fullfor PostgreSQL. This is on our roadmap and will be available in a future release.

Required privileges

DataMasque connections require the following database privileges to perform masking tasks.

Note: Run SQL tasks may require additional privileges, make sure you have granted the right privileges for the connection user to run the scripts.

Amazon DynamoDB connections

Masking an Amazon DynamoDB table has five steps:

- The table to be masked is exported to an S3 bucket.

- DataMasque reads the exported data and masks it.

- The masked data is uploaded back to the same S3 bucket.

- An Amazon DynamoDB import is run to load the masked data into a new table.

- The masked and unmasked data is removed from the S3 bucket.

This process is much faster than masking data in place. Because of this approach, your DataMasque instance requires access to both the Amazon DynamoDB table being masked, and the staging S3 bucket to store the data in transit.

Access control

It is important to restrict access on the S3 bucket you configure to use on your Amazon DynamoDB connection.

- Blocks public access, read the Blocking public access to your Amazon S3 storage guide for information on how to set up public blocks.

- Apply access control to your S3 bucket. The How can I secure the files in my Amazon S3 bucket? guide has information on steps to take to secure your S3 bucket.

Disable object lock

Object Lock must be disabled on your S3 bucket to allow DataMasque to clean up staging data when masking is completed. If Object Lock is not disabled, staging data will be left in your S3 bucket.

Optional configurations

The following configurations are not required, but recommended:

- Disable Bucket Versioning.

For AWS EC2 instances: Follow the steps below to configure the IAM Role which can be attached to the EC2 running DataMasque to allow for masking of Amazon DynamoDB database tables and accessing your S3 bucket:

- Open the Amazon IAM console, create an IAM policy. The following example IAM

policy grants the required privileges for Amazon DynamoDB and S3, where <bucket-name> and <table-name>

should be replaced with the S3 staging bucket and table being masked respectively.

Sidattributes have been added to explain the purpose of each statement. Further explanation of theResourceattributes are given after the example policy.

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "AllowEventLogging",

"Effect": "Allow",

"Action": [

"logs:CreateLogStream",

"logs:DescribeLogGroups",

"logs:DescribeLogStreams",

"logs:CreateLogGroup",

"logs:PutLogEvents"

],

"Resource": "*"

},

{

"Sid": "ExportedDataAccess",

"Effect": "Allow",

"Action": [

"s3:GetBucketPublicAccessBlock",

"s3:GetBucketPolicyStatus",

"s3:GetBucketObjectLockConfiguration",

"s3:PutObject",

"s3:GetObject",

"s3:GetEncryptionConfiguration",

"s3:ListBucket",

"s3:GetBucketAcl",

"s3:DeleteObject"

],

"Resource": [

"arn:aws:s3:::<bucket-name>",

"arn:aws:s3:::<bucket-name>/*"

]

},

{

"Sid": "SourceTableExport",

"Effect": "Allow",

"Action": [

"dynamodb:DescribeTable",

"dynamodb:DescribeContinuousBackups",

"dynamodb:ExportTableToPointInTime"

],

"Resource": [

"arn:aws:dynamodb:<region>:<account-id>:table/<table-name>"

]

},

{

"Sid": "ExportProcessStatus",

"Effect": "Allow",

"Action": [

"dynamodb:DescribeExport"

],

"Resource": [

"arn:aws:dynamodb:<region>:<account-id>:table/<table-name>/*"

]

},

{

"Sid": "TargetTableImportAndCleanup",

"Effect": "Allow",

"Action": [

"dynamodb:DeleteTable",

"dynamodb:DescribeTable",

"dynamodb:ImportTable",

"dynamodb:UpdateTable"

],

"Resource": [

"arn:aws:dynamodb:<region>:<account-id>:table/<table-name>*"

]

},

{

"Sid": "ImportProcessStatus",

"Effect": "Allow",

"Action": [

"dynamodb:DescribeImport"

],

"Resource": [

"arn:aws:dynamodb:<region>:<account-id>:table/<table-name>*/*"

]

}

]

}

Note: Since the policy above makes use of wildcards (

*) you may receive warnings when adding the policy through the AWS console. Since not all the wildcards can be removed (as explained below) these warning can be ignored.

Since the name of the S3 staging bucket is defined as part of the DataMasque connection, it is static, so it can be hard-coded as part of the policy. The Amazon DynamoDB table names are defined as part of ruleset, so more flexibility is needed when specifying the IAM policy.

The SourceTableExport statement controls access to the source table to get information about it. DataMasque requires

point-in-time-recovery (PITR)/continuous backups to be enabled for a table in order to be able to export it, therefore

it requires permission be able to check that the table has these enabled.

The Resource arguments for the SourceTableExport statement specify the source tables that DataMasque has access to.

Specific tables can be named, or * can be used to allow access to all tables in your account. Multiple Resource

entries can be added to grant access to multiple named tables. Here are some Resource examples:

"arn:aws:dynamodb:*:*:table/*": allow access to all tables in your account."arn:aws:dynamodb:*:*:table/users": allow access an exampleuserstable."arn:aws:dynamodb:us-east-1:123456789012:table/users": allow access an exampleuserstable, in theus-east-1region, for the account123456789012.

The ExportProcessStatus statement contains permissions to allow DataMasque to query the status of the source table's

export process. Under Resource, the source table name can be set, followed by /*. A trailing wildcard is required as

the ID of the export process is unknown until started. A * can be specified instead of a table name to allow access to

export statuses for all tables in your account. Multiple Resource entries can be added to grant access to multiple

named tables. Here are some Resource examples:

"arn:aws:dynamodb:*:*:table/*": allow access to all export processes in your account."arn:aws:dynamodb:*:*:table/users/*": allow access to export processes for just an exampleuserstable."arn:aws:dynamodb:us-east-1:123456789012:table/users/*": allow access to export processes for just an exampleuserstable, in a specific region and account.

The permissions under TargetTableImportAndCleanup allow importing masked data from S3 to the target table. The

Resource attributes make use of the wildcard as sometimes the name of the target table cannot be predicted. The name

of the target table is generated from the source table, with a suffix added. By default, the suffix is random, therefore

the example shows how to specify the source table name followed by a * (i.e. any suffix).

If specifying the suffix as part of the DataMasque connection settings (the Specify Suffix setting), then the suffix can be specified in the policy too.

Multiple Resource entries can be added to grant access to more named tables. Here are some Resoruce examples:

"arn:aws:dynamodb:*:*:table/*": allow access to import into any table."arn:aws:dynamodb:*:*:table/users*": allow access to import into an example tableuserswith any suffix."arn:aws:dynamodb:*:*:table/users-masked": allow access to import into an example tableusers-masked. Must be used in conjunction with the Specify Suffix setting. In this case, the suffix-maskedwas specified."arn:aws:dynamodb:us-east-1:123456789012:table/users-masked": allow access to import into an example tableusers-masked, in a specific region and account. Again, used with the Specify Suffix setting.

Note that the dynamodb:DeleteTable permission is only required if the Replace existing tables option is turned on

in the Amazon DynamoDB connection's Advanced Settings.

The ImportProcessStatus statement contains permissions to allow DataMasque to query the status of the target table's

import process. Under Resource, the source table name can be supplied, followed by a *, to allow any suffix. It is

then followed by /* to allow access to any import process status for that table. As with the export process, the

import process ID is not known until the process is started, so the use of wildcard is necessary.

As with the access to the table itself, if the suffix has been specified as part of the connection settings, it can be used in the policy.

Multiple Resource entries can be added to grant access to multiple named tables. Here are some Resoruce examples:

"arn:aws:dynamodb:*:*:table/*": allow access to all import processes in your account."arn:aws:dynamodb:*:*:table/users*/*": allow access to import processes for auserstable with any suffix."arn:aws:dynamodb:*:*:table/users-masked/*": allow access to import processes for an example tableusers-masked. Must be used in conjunction with the Specify Suffix setting. In this case, the suffix-maskedwas specified."arn:aws:dynamodb:us-east-1:123456789012:table/users-masked/*": allow access to import processes for an example tableusers-masked, in the specified region and account. Again, used with the Specify Suffix setting.

Create an IAM role:

- Select

AWS serviceas the trusted entity,EC2as the service. - Attach the policy created from the previous step to the role.

- Select

Open the Amazon EC2 and associate the IAM role to your EC2.

Important:* EC2 instance are unable to determine the AWS region in which they reside. The region is provided if setting up a

~/.aws/credentialsfile, but not in the case of attaching a policy. In this case, the Amazon DynamoDB table name in the YAML ruleset must be prefixed by the region and a forward slash. For example, the tableusersin regionus-east-1should be specified asus-east-1/users:

version: "1.0"

tasks:

- type: mask_table

table: '"us-east-1/users"'

Advanced SSE Key Permissions

All Amazon DynamoDB tables are server-side encrypted, however the way the encryption keys are managed is configurable. Please refer to Amazon DynamoDB Encryption key management for background on the types of key management available.

There are three scenarios when using DataMasque, where extra permissions are required on the IAM policy to access KMS keys:

- The option Stored in your account, and owned and managed by you is selected when configuring how the target Amazon DynamoDB table should be imported.

- The option Use configuration from the original table is selected when configuring how the target Amazon DynamoDB table should be imported, and the source table uses Stored in your account, and owned and managed by you for key management.

- The option Use configuration from the original table is selected when configuring how the target Amazon DynamoDB table should be imported, and the source table uses AWS managed key for key management.

In these cases, an extra IAM statement should be added to the policy to allow access to the particular keys(s) that the table(s) use:

{

"Sid": "KMSAccess",

"Effect": "Allow",

"Action": [

"kms:CreateGrant",

"kms:DescribeKey",

"kms:Decrypt"

],

"Resource": [

"<key-arn>"

]

}

Note: If directly choosing AWS managed key as the key management type for the new table, no extra permissions are required. The extra permissions are only needed if using the source table's configuration and the source table uses AWS managed key as DataMasque must perform extra checks to verify the actual key ARN of the source table.

<key-arn> should be replaced with the ARN of the key that is to be used. ARNs for keys can be found in the AWS KMS Console. ARNs for keys you have created are found by choosing Customer-managed keys from the sidebar, then clicking the alias or ID of the key you want to use. The ARN is displayed under General configuration. To find the AWS managed key for Amazon DynamoDB, choose AWS managed keys from the sidebar then click the aws/dynamodb alias. The ARN is displayed under General configuration.

For Non-EC2 machines: Please follow the steps for setting up the AWS credentials For non-EC2 machines

Enabling Point-in-time Recovery Backup

Point-in-time Recovery Backup must be enabled in order for DataMasque to perform masking on Amazon DynamoDB. To enable this, follow the instructions in the official AWS Hands-on lab for Amazon DynamoDB.

Config carried over to masked tables

When masking an Amazon DynamoDB table, the new recreated table will have the following properties set to the exact values that the source table was configured with without reconstructing them.

KeySchemaAttributeDefinitions

The following properties will be set to mirror the source table.

BillingMode(Please check the notes below)ProvisionedThroughtput(Please check the notes below)Global Secondary IndexesLocalSecondaryIndexes(Please check the notes below)SSESpecificationTableClass

The following properties WILL NOT be carried over from the source table.

- Any auto-scaling policies.

- IAM policies associated with the table.

- Amazon Cloudwatch metrics and alarms.

- Any tag associated with the table.

- Stream settings.

- Time to Live (TTL) settings.

- Point-In-Time-Recovery(PITR).

- Any properties associated with global tables such as Replication.

- Deletion Protection.

- Backups

Notes:

BillingModedefaults toPROVISIONEDfor masked tables asBillingModeis not returned when callingdescribe_tableonPROVISIONEDtables. WhenBillingModeis set toPAY_PER_REQUEST, this will be carried over to the masked table.ProvisionedThroughtputwill be fixed for imported tables, auto-scaling will not be enabled.LocalSecondaryIndexeswill be carried over from the source table and recreated on the masked table asGlobalSecondaryIndexes. This is due the fact thatLocalSecondaryIndexescan only be added when creating the table and cannot be created when importing the table from S3. TheseGlobalSecondaryIndexeswill be created with the minimum read/write capacity (1/1) as this will affect the cost of Amazon DynamoDB.

Please refer to the AWS Documentation relating to read/write capacity.

Amazon RDS connections

Microsoft SQL Server (AWS RDS)

A DataMasque connection to an AWS RDS Microsoft SQL Server requires the same statements to set up the privileges as a normal Microsoft SQL Server connection. Please refer to MSSQL connections for more information. The AWS RDS for SQL Server Documentation has more information specifically on managing AWS RDS Microsoft SQL Server users and roles.

MySQL (AWS RDS)

A DataMasque connection to an AWS RDS MySQL instance requires the same statements to set up the privileges as a normal MySQL connection. Please refer to MySQL connections for more information. The AWS RDS for MySQL Documentation has more information specifically on managing AWS RDS MySQL users and roles.

Oracle (AWS RDS)

A DataMasque connection from an AWS RDS Oracle instance requires the following permissions executed as an admin user:

GRANT CREATE SESSION, ALTER SESSION, CREATE TABLE TO <connection user>;

ALTER USER <connection user> QUOTA UNLIMITED ON <target data tablespace>;

ALTER USER <connection user> QUOTA UNLIMITED ON <temp tablespace>;

EXECUTE rdsadmin.rdsadmin_util.grant_sys_object( p_obj_name => 'DBA_TABLES',p_grantee => '<connection user>', p_privilege => 'SELECT');

EXECUTE rdsadmin.rdsadmin_util.grant_sys_object( p_obj_name => 'DBA_INDEXES',p_grantee => '<connection user>', p_privilege => 'SELECT');

EXECUTE rdsadmin.rdsadmin_util.grant_sys_object( p_obj_name => 'DBA_CONSTRAINTS',p_grantee => '<connection user>', p_privilege => 'SELECT');

EXECUTE rdsadmin.rdsadmin_util.grant_sys_object( p_obj_name => 'DBA_DATA_FILES',p_grantee => '<connection user>', p_privilege => 'SELECT');

EXECUTE rdsadmin.rdsadmin_util.grant_sys_object( p_obj_name => 'DBA_TAB_COLUMNS',p_grantee => '<connection user>', p_privilege => 'SELECT');

EXECUTE rdsadmin.rdsadmin_util.grant_sys_object( p_obj_name => 'DBA_CONS_COLUMNS',p_grantee => '<connection user>', p_privilege => 'SELECT');

EXECUTE rdsadmin.rdsadmin_util.grant_sys_object( p_obj_name => 'V_$DATABASE',p_grantee => '<connection user>', p_privilege => 'SELECT');

EXECUTE rdsadmin.rdsadmin_util.grant_sys_object( p_obj_name => 'V_$SESSION',p_grantee => '<connection user>', p_privilege => 'SELECT');

Check the AWS RDS Oracle privileges documentation for more information related to AWS RDS Oracle privileges.

PostgreSQL (AWS RDS)

A DataMasque connection to an AWS RDS PostgreSQL instance requires the same statements to set up the privileges as a normal PostgreSQL connection. Please refer to PostgreSQL connections for more information. The AWS PostgreSQL Users and Roles article has more information specifically on managing AWS RDS PostgreSQL users and roles.

Amazon Redshift connections

Database privileges

A DataMasque connection for Redshift requires that the user is the owner of the target tables, and:

TEMPORARYprivileges to the target database.Ensure the connection user is a direct or indirect member of the user that owns the target tables(s).

SELECTprivilege onALL TABLES IN SCHEMA PG_CATALOGandINFORMATION_SCHEMA.COLUMNS.USAGEandCREATEprivileges to the target schema.Ensure the connection user has visibility (in their schema search path) of all tables that will be masked.

GRANT TEMPORARY ON DATABASE <target database> to <connection user>;

GRANT <target table owner user> TO <connection user>;

GRANT SELECT ON INFORMATION_SCHEMA.TABLES TO <connection user>;

GRANT SELECT ON INFORMATION_SCHEMA.COLUMNS TO <connection user>;

GRANT SELECT ON ALL TABLES IN SCHEMA PG_CATALOG TO <connection user>;

GRANT USAGE, CREATE ON SCHEMA <target schema> TO <connection user>;

ALTER USER <connection user> SET SEARCH_PATH TO <target schema>;

GRANT SELECT ON INFORMATION_SCHEMA.TABLE_CONSTRAINTS TO <connection user>;

GRANT SELECT ON INFORMATION_SCHEMA.KEY_COLUMN_USAGE TO <connection user>;

GRANT SELECT ON INFORMATION_SCHEMA.CONSTRAINT_COLUMN_USAGE TO <connection user>;

Amazon Redshift Additional configuration

For masking on Amazon Redshift, DataMasque requires:

- An S3 bucket that is not public, with Object Lock disabled.

- An IAM role with an access policy attached to allow the Amazon Redshift database to access the S3 bucket.

- A dedicated AWS user with programmatic access configured and access policy attached that allows DataMasque to access the S3 bucket.

Configuring the Amazon S3 bucket

Access control

DataMasque uses Amazon S3 buckets as a staging area for processing Amazon Redshift database data. Therefore, it is important to restrict access on the S3 bucket you configure to use on your Amazon Redshift connection.

- Blocks public access, follow this link to enable Block Public Access feature on your S3 bucket.

- Apply access control to your S3 bucket. Follow this link for the steps to secure your S3 bucket.

Disable object lock

Object Lock must be disabled on your S3 bucket to allow DataMasque to clean up staging data when masking is completed. If Object Lock is not disabled, staging data will be left in your S3 bucket and a warning message will be included in the run log.

Optional configurations

The following configurations are not required, but recommended:

- Configure S3 buckets with SSE-S3, server side encryption.

- Disable Bucket Versioning.

Configuring Amazon Redshift database

Configuring access to S3 Bucket

Your Amazon Redshift database must have read-write access to the S3 bucket configured on your connection.

Follow the steps below to configure the Amazon Redshift database that will be accessing your S3 bucket:

- Open the Amazon IAM console, create an IAM policy. The following

example IAM policy shows required permissions, where <bucket-name> should be replaced with the name of your S3

staging bucket.

Sidattributes have been added to explain the purpose of each statement.

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "BucketContentPermissions",

"Effect": "Allow",

"Action": [

"s3:PutObject",

"s3:GetObject",

"s3:AbortMultipartUpload",

"s3:DeleteObject",

"s3:ListMultipartUploadParts"

],

"Resource": "arn:aws:s3:::<bucket-name>/*"

},

{

"Sid": "BucketMetaDataPermissions",

"Effect": "Allow",

"Action": [

"s3:GetBucketPublicAccessBlock",

"s3:GetBucketPolicyStatus",

"s3:GetBucketObjectLockConfiguration",

"s3:GetEncryptionConfiguration",

"s3:ListBucketMultipartUploads",

"s3:ListBucket",

"s3:GetBucketAcl"

],

"Resource": "arn:aws:s3:::<bucket-name>"

}

]

}

- Create an IAM role:

- Select

AWS serviceas the trusted identity,Redshiftas the service andRedshift - Cutomizableas the use case. - Attach the policy created from the previous step to the role.

- Select

- Open the Amazon Redshift console and associate the IAM role to your Amazon Redshift database.

Reserve storage space for data processing

DataMasque requires additional storage on the database for creating staging tables when masking data. It is crucial to have sufficient storage space on your Amazon Redshift database to accommodate the total size of the tables you will be masking in parallel.

Configuring DataMasque's access to S3 bucket

DataMasque must be able to access the S3 bucket provided. A Profile that grants access to the bucket must be attached to the EC2 instance running DataMasque.

Follow the steps below to configure your DataMasque to access your S3 bucket:

- Open the Amazon IAM console and create an IAM policy. The following

example IAM policy shows required permissions, where <bucket-name> should be replaced with the name of your S3

staging bucket.

Sidattributes have been added to explain the purpose of each statement.

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "BucketContentPermissions",

"Effect": "Allow",

"Action": [

"s3:PutObject",

"s3:GetObject",

"s3:AbortMultipartUpload",

"s3:DeleteObject",

"s3:ListMultipartUploadParts"

],

"Resource": "arn:aws:s3:::<bucket-name>/*"

},

{

"Sid": "BucketMetaDataPermissions",

"Effect": "Allow",

"Action": [

"s3:GetBucketPublicAccessBlock",

"s3:GetBucketPolicyStatus",

"s3:GetBucketObjectLockConfiguration",

"s3:GetEncryptionConfiguration",

"s3:ListBucketMultipartUploads",

"s3:ListBucket",

"s3:GetBucketAcl"

],

"Resource": "arn:aws:s3:::<bucket-name>"

}

]

}

Create an IAM role:

- Select

AWS serviceas the trusted identity,EC2as the service andEC2underSelect your use case. - Attach the policy created from the previous step to the role.

- Select

Attach the Amazon IAM role created to the target DataMasque instance. You can use the following AWS command to attach an IAM role to an existing EC2 instance.

aws ec2 associate-iam-instance-profile --instance-id <ec2 instance id> --iam-instance-profile Name=<name of IAM role>The AWS CLI

associate-iam-instance-profiledocumentation has more information on this command.

Microsoft SQL Server connections

A DataMasque connection for Microsoft SQL Server requires:

CONNECTandCREATE TABLEpermissions.SELECT,UPDATE,INSERT,ALTERandREFERENCESpermissions to the target schema.Ensure the connection user has the default schema set to the target schema for masking.

USE <target database>;

GRANT CONNECT, CREATE TABLE TO <connection user>;

GRANT SELECT, UPDATE, INSERT, ALTER, REFERENCES ON SCHEMA::<target schema> TO <connection user>;

ALTER USER <connection user> WITH DEFAULT_SCHEMA = <target schema>;

MySQL connections

A DataMasque connection for MySQL requires:

CREATE TEMPORARY TABLESprivileges to the database.CREATE,DROP,INDEX,SELECT,UPDATE,INSERT,ALTERandREFERENCESprivileges to the target tables.

GRANT CREATE TEMPORARY TABLES ON <target database>.* TO <connection user>@<DataMasque host>;

GRANT CREATE, SELECT, UPDATE, INSERT, ALTER, REFERENCES ON <target database>.<target tables> TO <connection user>@<DataMasque host>;

Oracle connections

A DataMasque connection for Oracle requires:

CREATE SESSION,ALTER SESSION,UNLIMITED TABLESPACEandCREATE TABLEsystem privileges.SELECTonDBA_TABLES,DBA_INDEXES,DBA_CONSTRAINTS,DBA_DATA_FILES,DBA_TAB_COLUMNS,V_$DATABASEandV_$SESSION.

GRANT CREATE SESSION, ALTER SESSION, UNLIMITED TABLESPACE, CREATE TABLE TO <connection user>;

GRANT SELECT ON DBA_TABLES TO <connection user>;

GRANT SELECT ON DBA_INDEXES TO <connection user>;

GRANT SELECT ON DBA_CONSTRAINTS TO <connection user>;

GRANT SELECT ON DBA_DATA_FILES TO <connection user>;

GRANT SELECT ON DBA_TAB_COLUMNS TO <connection user>;

GRANT SELECT ON DBA_CONS_COLUMNS TO <connection user>;

GRANT SELECT ON V$DATABASE TO <connection user>;

GRANT SELECT ON V$SESSION TO <connection user>;

If the connection user is not the schema owner:

SELECT,ALTERandUPDATEprivileges to the target table(s).

GRANT SELECT, ALTER, UPDATE ON <target tables> TO <connection user>;

Note:

For

build_temp_tabletasks, you will require additional privileges on the target schema in order to create temporary tables:SELECT ANY TABLE,UPDATE ANY TABLE,CREATE ANY TABLE,ALTER ANY TABLEandDROP ANY TABLEsystem privileges.For

truncate_tabletasks, when the connection user is not the owner of the target tables, you will requireDROP ANY TABLEsystem privilege.For

mask_unique_keytasks, when the connection user is not the owner of the target tables, you will requireCREATE ANY TABLEandDROP ANY TABLEsystem privileges.

PostgreSQL connections

A DataMasque connection for PostgreSQL requires:

CONNECTandTEMPORARYprivileges to the target database.Ensure the connection user is a direct or indirect member of the role that owns the target tables(s).

SELECTprivilege onALL TABLES IN SCHEMA PG_CATALOGandINFORMATION_SCHEMA.COLUMNS.USAGEandCREATEprivileges to the target schema.Ensure the connection user has visibility (in their schema search path) of all tables that will be masked.

GRANT CONNECT, TEMPORARY ON DATABASE <target database> to <connection user>;

GRANT <target table owner role> TO <connection user>;

GRANT SELECT ON INFORMATION_SCHEMA.TABLES TO <connection user>;

GRANT SELECT ON INFORMATION_SCHEMA.COLUMNS TO <connection user>;

GRANT SELECT ON ALL TABLES IN SCHEMA PG_CATALOG TO <connection user>;

GRANT USAGE, CREATE ON SCHEMA <target schema> TO <connection user>;

ALTER USER <connection user> IN DATABASE <target database> SET SEARCH_PATH TO <target schema>;

GRANT SELECT ON INFORMATION_SCHEMA.TABLE_CONSTRAINTS TO <connection user>;

GRANT SELECT ON INFORMATION_SCHEMA.KEY_COLUMN_USAGE TO <connection user>;

GRANT SELECT ON INFORMATION_SCHEMA.CONSTRAINT_COLUMN_USAGE TO <connection user>;